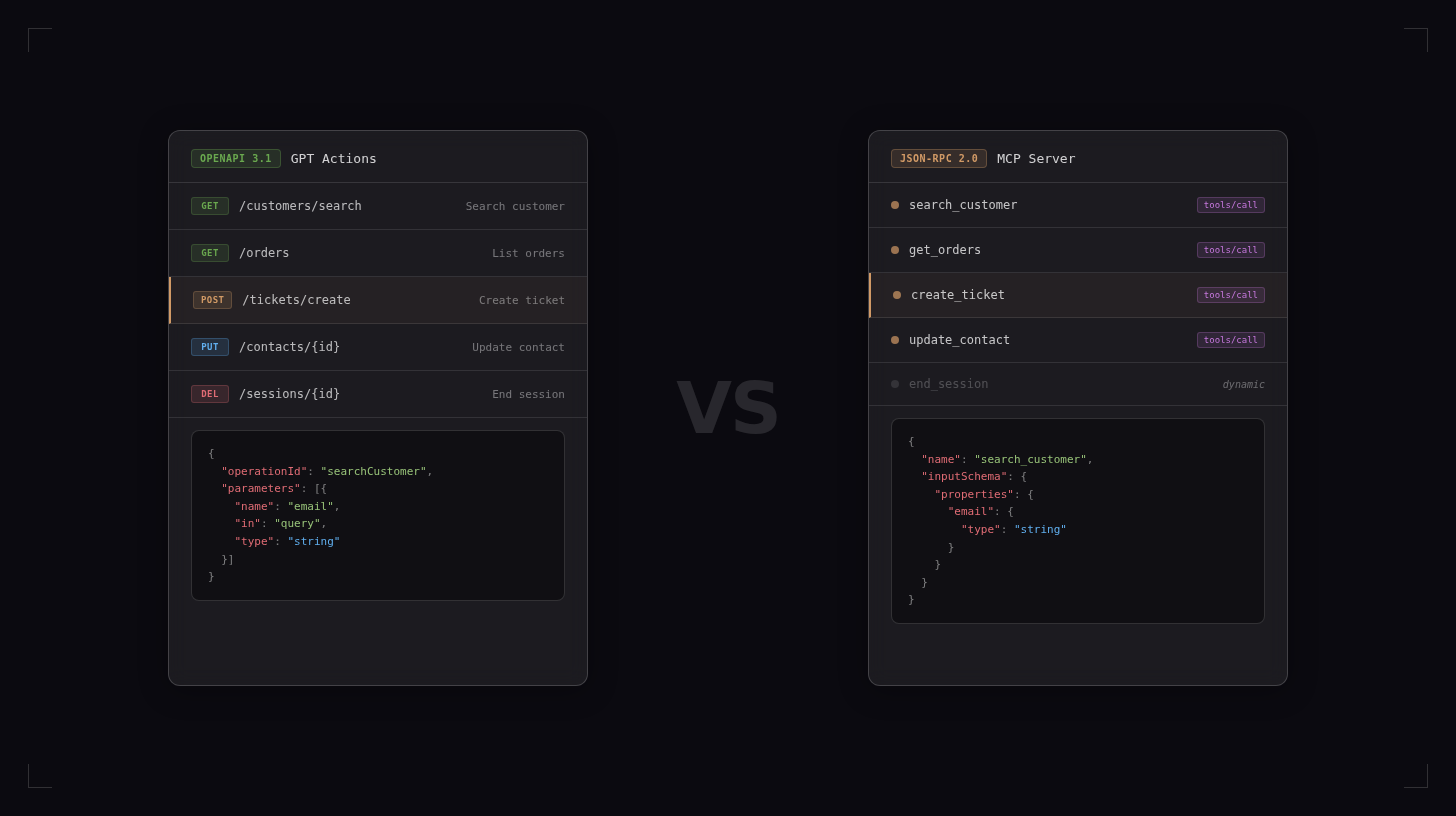

OpenAI’s GPT Actions and Anthropic’s Model Context Protocol (MCP) solve the same fundamental problem: letting an AI model call external tools and APIs during a conversation. They solve it in very different ways.

This post is a technical comparison of both approaches. It covers architecture, protocol mechanics, authentication, tool discovery, and the trade-offs that matter when choosing between them (or using both).

What are GPT Actions?

GPT Actions are OpenAI’s mechanism for letting Custom GPTs (and, more broadly, assistants built via the OpenAI API) call external HTTP APIs. The concept shipped with Custom GPTs in November 2023, ahead of the GPT Store launch in January 2024, and evolved from the earlier ChatGPT Plugins system.

The core idea: you provide an OpenAPI specification (version 3.0 or 3.1) that describes your API’s endpoints, parameters, and response schemas. OpenAI’s system parses this spec and presents the endpoints as callable tools to the model. When the model decides to invoke one, OpenAI’s servers make the HTTP request on behalf of the user.

Here is a minimal OpenAPI schema that defines a single action for looking up a customer by email:

```yaml
openapi: "3.1.0"
info:
  title: "Customer Lookup API"
  version: "1.0.0"
servers:
  - url: "https://api.example.com"
paths:
  /customers/search:
    get:
      operationId: "searchCustomer"
      summary: "Search for a customer by email"
      parameters:
        - name: "email"
          in: "query"
          required: true
          schema:
            type: "string"
            format: "email"
          description: "The customer's email address"
      responses:
        "200":
          description: "Customer found"
          content:
            application/json:
              schema:
                type: "object"
                properties:
                  id:
                    type: "string"
                  name:
                    type: "string"
                  email:
                    type: "string"
                  plan:
                    type: "string"
```

You paste or upload this schema into the GPT Builder (or reference it via URL), configure authentication, and the Custom GPT can now call `GET /customers/search?email=...` whenever the model deems it relevant.
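To make the mapping from spec to request concrete, here is a minimal Python sketch of how an executor could turn an `operationId` plus model-supplied arguments into an HTTP request. It illustrates the idea under simplifying assumptions (query parameters only, first `servers` entry) and is not OpenAI's actual implementation; `build_request` and the inlined `SPEC` dict are hypothetical names.

```python
from urllib.parse import urlencode

# The relevant parts of the schema above, inlined so the sketch is self-contained.
SPEC = {
    "servers": [{"url": "https://api.example.com"}],
    "paths": {
        "/customers/search": {
            "get": {
                "operationId": "searchCustomer",
                "parameters": [{"name": "email", "in": "query", "required": True}],
            }
        }
    },
}


def build_request(spec: dict, operation_id: str, arguments: dict) -> tuple:
    """Return (HTTP method, URL) for the operation with the given operationId."""
    base_url = spec["servers"][0]["url"]
    for path, methods in spec["paths"].items():
        for method, operation in methods.items():
            if operation.get("operationId") != operation_id:
                continue
            query = {}
            for param in operation.get("parameters", []):
                name = param["name"]
                if name in arguments:
                    if param["in"] == "query":
                        query[name] = arguments[name]
                elif param.get("required"):
                    raise ValueError(f"missing required parameter: {name}")
            url = base_url + path
            if query:
                url += "?" + urlencode(query)
            return method.upper(), url
    raise KeyError(f"unknown operationId: {operation_id}")


method, url = build_request(SPEC, "searchCustomer", {"email": "user@example.com"})
print(method, url)
# GET https://api.example.com/customers/search?email=user%40example.com
```

The model only ever supplies the `operationId` and the arguments; everything else about the request comes from the spec, which is why clear `summary` and `description` fields matter.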

Key architectural properties of GPT Actions:

- Server-side execution: OpenAI’s infrastructure makes the outbound HTTP call. Your API receives the request from OpenAI’s IP range, not from the end user’s browser.

- OpenAPI as the schema format: The model uses the `operationId`, `summary`, and `description` fields from the OpenAPI spec to understand when and how to call each endpoint.

- REST/HTTP only: Actions are limited to standard HTTP methods (GET, POST, PUT, DELETE, PATCH) over HTTPS.

- Authentication options: OAuth 2.0, API key (sent as a header or query parameter), or no auth. OpenAI handles the OAuth token exchange flow on the user’s behalf.

- Static tool definitions: The set of available actions is fixed at configuration time. The model cannot discover new endpoints at runtime.

What is MCP?

Model Context Protocol (MCP) is an open protocol, published by Anthropic in late 2024, that standardizes how AI applications communicate with external tools and data sources. It is not tied to any specific model or vendor.

We wrote a complete guide to how MCP works that covers the protocol from first principles. The short version: MCP defines a client-server architecture where an AI application (the MCP client) connects to one or more MCP servers, discovers available tools via a tools/list request, and invokes them via tools/call messages. Communication uses JSON-RPC 2.0.

Here is the same customer lookup tool expressed as an MCP tool definition:

```json
{
  "name": "search_customer",
  "description": "Search for a customer by email address",
  "inputSchema": {
    "type": "object",
    "properties": {
      "email": {
        "type": "string",
        "description": "The customer's email address"
      }
    },
    "required": ["email"]
  }
}
```

This definition lives inside an MCP server (a small program that wraps your business logic). When an MCP client sends a `tools/call` request with `name: "search_customer"` and `arguments: {"email": "user@example.com"}`, the server executes whatever code is behind that tool (an API call, a database query, a file read) and returns the result.
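On the wire, discovery and invocation are ordinary JSON-RPC 2.0 messages. The Python sketch below builds the `tools/list` and `tools/call` requests and shows the general shape of a successful result. The helper `jsonrpc_request` is a hypothetical name, and the customer record in the response is made-up sample data.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # each pending JSON-RPC request needs its own id


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Discovery: ask the server which tools it currently offers.
list_request = jsonrpc_request("tools/list")

# Invocation: call one tool by name with arguments matching its inputSchema.
call_request = jsonrpc_request(
    "tools/call",
    {"name": "search_customer", "arguments": {"email": "user@example.com"}},
)

# Shape of a successful result: a list of content blocks, matched to the request by id.
call_response = {
    "jsonrpc": "2.0",
    "id": call_request["id"],
    "result": {
        "content": [{"type": "text", "text": json.dumps({"id": "c_1", "plan": "pro"})}],
        "isError": False,
    },
}

print(json.dumps(call_request, indent=2))
```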

Architecture comparison

The two approaches differ in where code runs, how communication flows, and what abstractions they expose.

GPT Actions: centralized, HTTP-native

```
User <-> ChatGPT (OpenAI servers) --HTTPS--> Your API server
```

The model and the action executor live together on OpenAI’s side. When the model decides to call an action, OpenAI’s backend constructs an HTTP request based on the OpenAPI spec, sends it to your API, receives the response, and feeds the result back to the model. The user’s client (browser, mobile app) never communicates directly with your API.

This means:

- Your API must be publicly accessible over HTTPS (or at least reachable from OpenAI’s infrastructure).

- Latency includes a round-trip from OpenAI to your server and back.

- All request/response data passes through OpenAI’s servers.

MCP: decentralized, protocol-native

```
User <-> AI Application (MCP Client) <--JSON-RPC--> MCP Server <-> Your API / DB / service
```

The MCP client runs wherever the AI application runs. For Claude Desktop, that is on the user’s machine. For a cloud-hosted AI agent (like Quickchat AI), the MCP client runs on the agent’s backend. The MCP server can run locally (communicating over stdio), on the same network, or remotely over HTTP with Server-Sent Events (SSE) or the newer Streamable HTTP transport.

This means:

- MCP servers can access local resources (files, databases, localhost services) that are not exposed to the public internet.

- Communication is bidirectional. Servers can send notifications to clients (e.g., when a resource changes).

- The protocol is transport-agnostic. The same JSON-RPC messages work over stdio pipes, HTTP with SSE, or Streamable HTTP.
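Bidirectionality rests on a JSON-RPC 2.0 detail: a message with a `method` but no `id` is a notification, which expects no reply. MCP servers use this for events such as `notifications/tools/list_changed`. The sketch below shows how a client might classify incoming messages and react; `classify`, `handle_incoming`, and the `state` dict are hypothetical names, not part of any SDK.

```python
def classify(message: dict) -> str:
    """Classify a JSON-RPC 2.0 message as 'request', 'notification', or 'response'."""
    if "method" in message:
        return "request" if "id" in message else "notification"
    return "response"


def handle_incoming(message: dict, state: dict) -> None:
    """Mark the cached tool list stale when the server announces a change."""
    if (
        classify(message) == "notification"
        and message["method"] == "notifications/tools/list_changed"
    ):
        state["tools_stale"] = True  # the client should send tools/list again


state = {"tools_stale": False}
handle_incoming({"jsonrpc": "2.0", "method": "notifications/tools/list_changed"}, state)
print(state)  # {'tools_stale': True}
```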

Comparison table

| Dimension | GPT Actions | MCP |
|---|---|---|
| Vendor lock-in | OpenAI only. Works exclusively within ChatGPT and the OpenAI API. | Vendor-agnostic. Supported by Claude, Cursor, VS Code, Windsurf, Quickchat AI, and others. |
| Protocol | OpenAPI 3.x over REST/HTTP. | JSON-RPC 2.0 over configurable transports. |
| Transport | HTTPS only. Your API must be publicly reachable. | stdio (local), SSE (remote), Streamable HTTP (remote). Local servers need no public endpoint. |
| Authentication | OAuth 2.0, API key (header or query param), or none. Managed by OpenAI. | Token-based (Bearer tokens in headers), custom headers, or none. Managed by the client application. |
| Tool discovery | Static. You upload or link an OpenAPI schema at configuration time. The model sees whatever is in the spec. | Dynamic. The client sends `tools/list` at runtime and the server responds with currently available tools. Tools can change between sessions. |
| Tool schema format | OpenAPI operation definitions (paths, methods, parameters, request bodies, response schemas). | JSON Schema for input parameters. Tool definitions include `name`, `description`, and `inputSchema`. |
| Execution location | OpenAI’s servers make the HTTP call. | The MCP client (or server) executes the call. Location depends on where the client and server run. |
| Bidirectionality | Unidirectional. The model calls your API; your API cannot push data back outside of the HTTP response. | Bidirectional. Servers can send notifications and resource-change events to clients. |
| State management | Stateless HTTP requests. No persistent connection between calls. | Stateful sessions. The client-server connection persists across multiple tool calls within a session. |
| Ecosystem | Closed. The spec format (OpenAPI) is open, but the execution environment is proprietary. | Open. The protocol specification is public. Anyone can build clients and servers. |
| Response format | Standard HTTP responses (JSON, text, etc.) parsed by OpenAI. | Structured MCP content blocks (text, images, embedded resources). |

Technical deep dive: a sample integration

To make the differences concrete, consider a common task: querying a PostgreSQL database for customer orders and returning the results to the user.

GPT Actions approach

You need to build and host an HTTP API in front of your database:

```python
# Flask API server (simplified)
from flask import Flask, request, jsonify
import psycopg2

app = Flask(__name__)


@app.route("/orders", methods=["GET"])
def get_orders():
    customer_email = request.args.get("email")
    if not customer_email:
        # Reject calls that omit the required query parameter
        return jsonify({"error": "email query parameter is required"}), 400

    conn = psycopg2.connect("dbname=mydb user=myuser")
    try:
        cur = conn.cursor()
        # Parameterized query: the driver escapes the value, preventing SQL injection
        cur.execute(
            "SELECT order_id, product, amount, status FROM orders WHERE customer_email = %s",
            (customer_email,),
        )
        rows = cur.fetchall()
        cur.close()
    finally:
        # Always release the connection, even if the query fails
        conn.close()

    return jsonify([
        {"order_id": r[0], "product": r[1], "amount": float(r[2]), "status": r[3]}
        for r in rows
    ])
```

Then you write an OpenAPI spec for this endpoint, deploy the API with TLS, configure authentication, and paste the spec into the GPT Builder. OpenAI handles the rest: it reads the spec, shows the model the available operation, and makes the HTTP call when needed.

The total setup involves: a running API server, a public HTTPS endpoint, an OpenAPI spec, and the GPT Action configuration in OpenAI’s UI.

MCP approach

You write an MCP server that wraps the same database query:

```python
# MCP server using the mcp Python SDK
import asyncio
import json

import psycopg2
from mcp.server import Server
from mcp.server.stdio import stdio_server
from mcp.types import Tool, TextContent

server = Server("orders-server")


@server.list_tools()
async def list_tools():
    # Returned to the client in response to tools/list
    return [
        Tool(
            name="get_orders",
            description="Look up orders for a customer by email",
            inputSchema={
                "type": "object",
                "properties": {
                    "email": {
                        "type": "string",
                        "description": "Customer email address"
                    }
                },
                "required": ["email"]
            }
        )
    ]


@server.call_tool()
async def call_tool(name: str, arguments: dict):
    if name != "get_orders":
        raise ValueError(f"Unknown tool: {name}")

    conn = psycopg2.connect("dbname=mydb user=myuser")
    try:
        cur = conn.cursor()
        cur.execute(
            "SELECT order_id, product, amount, status FROM orders WHERE customer_email = %s",
            (arguments["email"],)
        )
        rows = cur.fetchall()
        cur.close()
    finally:
        conn.close()

    results = [
        {"order_id": r[0], "product": r[1], "amount": float(r[2]), "status": r[3]}
        for r in rows
    ]
    return [TextContent(type="text", text=json.dumps(results, indent=2))]


async def main():
    # Serve over stdio so a local MCP client can launch this script directly
    async with stdio_server() as (read_stream, write_stream):
        await server.run(read_stream, write_stream, server.create_initialization_options())


if __name__ == "__main__":
    asyncio.run(main())
```

If the MCP server runs locally (over stdio), it can connect directly to a database on localhost or the local network. No public endpoint needed. If the server runs remotely, it needs an HTTP endpoint, but it communicates using JSON-RPC over SSE or Streamable HTTP rather than raw REST.

The total setup for local use involves: the MCP server script, the MCP client configuration (pointing to the server’s command), and a database connection. No OpenAPI spec, no TLS certificate, no public-facing API.
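For a desktop client such as Claude Desktop, the client configuration is a JSON file with an `mcpServers` map from a server name to the command that launches it. The sketch below generates such an entry. The server name `orders` and the script path are placeholders, and other MCP clients may expect a different config format.

```python
import json


def stdio_server_config(name: str, command: str, args: list) -> dict:
    """Build an `mcpServers` entry telling the client how to launch a stdio server."""
    return {"mcpServers": {name: {"command": command, "args": args}}}


# Placeholder path: point this at wherever the server script actually lives.
config = stdio_server_config("orders", "python", ["/path/to/orders_server.py"])
print(json.dumps(config, indent=2))
```

The client starts the command as a child process and exchanges JSON-RPC messages over its stdin and stdout, which is why no network endpoint is involved.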

Key differences in practice

The GPT Actions path requires more infrastructure (a deployed HTTP service) but leverages existing REST API patterns that most teams already know. If you already have a REST API, adding a GPT Action is mostly a matter of writing the OpenAPI spec.

The MCP path requires less infrastructure for local use cases but introduces a new protocol that your team needs to learn. For remote deployments, the infrastructure requirements are similar to GPT Actions, but the communication model is different (persistent connections, JSON-RPC framing, bidirectional notifications).

How Quickchat AI handles both approaches

Quickchat AI’s AI Actions system supports two distinct action types that map to these two paradigms: HTTP Request Actions and Remote MCP Actions.

HTTP Request Actions

HTTP Request Actions are conceptually similar to GPT Actions. You configure an HTTP endpoint, method, headers, query parameters, and a JSON body template. The AI Agent collects parameter values from the conversation and the Quickchat backend makes the HTTP request server-side.

An HTTP Request Action consists of:

- An endpoint URL with `{{parameter}}` placeholders (e.g., `https://api.hubapi.com/crm/v3/objects/contacts`)

- An HTTP method (GET, POST, PATCH, PUT, DELETE)

- Headers (including authorization tokens substituted server-side)

- A JSON body template with parameter placeholders

- Query parameters with placeholders

- A set of typed parameters (string, number, boolean, float) with descriptions, required flags, and default values

At execution time, the AI determines which parameters to fill based on conversation context. The backend injects parameter values into the URL, headers, body, and query string, replacing {{param_name}} placeholders. It then makes the HTTP request and returns the response to the AI for interpretation.
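The placeholder substitution step can be sketched in a few lines of Python. This illustrates the general technique only and is not Quickchat's implementation: `render`, the example URL, and the parameter names are all hypothetical, and a production version would also need to escape values inserted into JSON bodies.

```python
import re
from urllib.parse import quote

PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, params: dict, url_encode: bool = False) -> str:
    """Replace every {{name}} in template with the matching value from params."""

    def substitute(match):
        name = match.group(1)
        if name not in params:
            raise KeyError(f"no value for placeholder: {name}")
        value = str(params[name])
        # Percent-encode values that end up inside a URL path or query string
        return quote(value, safe="") if url_encode else value

    return PLACEHOLDER.sub(substitute, template)


params = {"contact_id": "42", "email": "user@example.com"}
url = render("https://api.example.com/contacts/{{contact_id}}", params, url_encode=True)
body = render('{"email": "{{email}}"}', params)
print(url)   # https://api.example.com/contacts/42
print(body)  # {"email": "user@example.com"}
```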

For a detailed example, see our HubSpot AI Actions guide.

Remote MCP Actions

Remote MCP Actions connect the AI Agent to an external MCP server. Instead of defining individual HTTP endpoints, you provide the MCP server’s URL and optional authentication credentials (a bearer token and/or custom headers). The Quickchat backend acts as an MCP client: it connects to the remote server, discovers available tools via the standard tools/list protocol call, and presents them to the AI model.

The configuration requires:

- The MCP server URL (an HTTPS endpoint serving the MCP Streamable HTTP or SSE transport)

- An optional authentication token

- Optional custom headers

- A list of discovered tools with individual active/inactive toggles

- A default tool activation setting (whether newly discovered tools are active by default)

Once connected, each tool exposed by the MCP server becomes available to the AI Agent during conversations. The AI sees the tool names and descriptions from the MCP server’s tools/list response and can invoke them as needed. The tool selection can be narrowed using the allowed_tools configuration, which lets you enable or disable specific tools from the server.
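The toggle logic described above amounts to filtering the `tools/list` result before it reaches the model. A minimal sketch, assuming per-tool toggles are stored as a name-to-boolean map; `active_tools` and the tool names are hypothetical and do not reflect Quickchat's internals or any real server's tool list:

```python
def active_tools(discovered: list, toggles: dict, default_active: bool) -> list:
    """Return the tools the model may see.

    toggles maps tool name -> explicit on/off choice. Tools without an entry
    (for example, newly discovered ones) fall back to default_active.
    """
    return [tool for tool in discovered if toggles.get(tool["name"], default_active)]


discovered = [
    {"name": "search_products", "description": "Search the catalog"},
    {"name": "update_cart", "description": "Add or remove cart items"},
    {"name": "get_policies", "description": "Fetch store policies"},
]
toggles = {"search_products": True, "update_cart": False}

visible = active_tools(discovered, toggles, default_active=True)
print([tool["name"] for tool in visible])  # ['search_products', 'get_policies']
```

Setting `default_active=False` instead would hide `get_policies` until someone explicitly enables it, which is the safer choice when a server may add tools with side effects.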

This approach is particularly useful when integrating with services that already publish MCP servers (like Shopify’s MCP) because you can connect to the existing server rather than building individual HTTP actions for each endpoint.

When to use which in Quickchat

Use HTTP Request Actions when:

- You are integrating with a specific REST API endpoint

- You want fine-grained control over the request format (headers, body structure, query params)

- The API does not have a published MCP server

- You need server-side credential injection (like OAuth tokens for HubSpot, Zendesk, etc.)

Use Remote MCP Actions when:

- The service publishes an MCP server

- You want to connect to multiple tools from a single service in one action

- You want dynamic tool discovery (the tools available to the AI can change as the MCP server updates)

- You are building a multi-agent system where one AI delegates to another via MCP

Pros and cons

GPT Actions

Advantages:

- Familiar technology. If you have an existing REST API, you already have most of what you need. Writing an OpenAPI spec is well-documented and tooling is mature.

- The OpenAPI format is widely adopted. Specs can be auto-generated from many web frameworks (FastAPI, Express with swagger-jsdoc, Spring Boot with springdoc).

- OpenAI executes the HTTP request and handles call failures on its infrastructure, so you do not build or host a tool-calling client. Your API still terminates TLS on its own side.

- OAuth 2.0 support is built in with automatic token refresh.

Disadvantages:

- Locked to OpenAI’s ecosystem. An action you build for a Custom GPT cannot be reused with Claude, Gemini, or any other model provider without reimplementation.

- Your API must be publicly accessible. No support for local or private-network resources.

- No dynamic tool discovery. Adding a new endpoint requires updating the OpenAPI spec and reconfiguring the GPT.

- Unidirectional only. Your API cannot push updates or notifications back to the model outside of HTTP responses.

- All data passes through OpenAI’s servers, which may be a concern for sensitive workloads.

- Rate limits and execution timeouts are controlled by OpenAI, not by you.

MCP

Advantages:

- Vendor-agnostic. The same MCP server works with Claude, Cursor, Quickchat AI, and any other client that implements the protocol.

- Supports local resources. An MCP server running over stdio can access files, databases, and services on the local machine without exposing anything to the internet.

- Dynamic tool discovery. The AI can query the server for available tools at runtime. Tools can be added, removed, or updated without reconfiguring the client.

- Bidirectional communication. Servers can notify clients of changes (e.g., a file was modified, a new record was created).

- Stateful sessions. The connection persists across multiple tool invocations within a conversation, enabling workflows that require context from previous calls.

- Open specification. Anyone can inspect, implement, or extend the protocol.

Disadvantages:

- Younger ecosystem. The protocol was published in late 2024, so tooling, documentation, and community knowledge are still developing compared to OpenAPI.

- Requires running an MCP server. For remote deployments, this means hosting an additional service (though it can be lightweight).

- Authentication is less uniform in practice (as of early 2026). The MCP specification defines an OAuth 2.1-based authorization flow for HTTP transports, but that part of the spec is still evolving, and implementations vary between static bearer tokens, custom headers, and OAuth.

- The JSON-RPC framing adds a layer of abstraction that may feel unfamiliar to teams used to REST.

- Client support varies. Not all AI applications support all MCP transports or features.

When to use which

The choice depends on your constraints:

Choose GPT Actions if:

- You are building exclusively for ChatGPT or the OpenAI API.

- You already have a public REST API with an OpenAPI spec.

- You need OAuth 2.0 handled for you by the platform.

- You want the simplest path from “existing API” to “AI-callable tool.”

Choose MCP if:

- You need your tools to work across multiple AI providers.

- You want to expose local or private-network resources to an AI without making them public.

- You need dynamic tool discovery, bidirectional communication, or stateful sessions.

- You are building a multi-agent architecture where different AI systems need to coordinate.

Use both if:

- You have an existing REST API that you want to expose as a GPT Action for ChatGPT users, but you also want to publish an MCP server for Claude, Cursor, and other clients. The underlying business logic can be shared; the protocol adapter is a thin layer on top.
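One way to see how thin the adapter layer can be: an OpenAPI operation carries enough information to derive an MCP tool definition mechanically. The sketch below handles only simple query and path parameters and is a hypothetical helper (`operation_to_mcp_tool`), not part of any official tooling.

```python
import re


def operation_to_mcp_tool(operation: dict) -> dict:
    """Convert one OpenAPI operation (simple parameters only) to an MCP tool definition."""
    properties, required = {}, []
    for param in operation.get("parameters", []):
        schema = dict(param.get("schema", {"type": "string"}))
        if "description" in param:
            schema["description"] = param["description"]
        properties[param["name"]] = schema
        if param.get("required"):
            required.append(param["name"])
    # searchCustomer -> search_customer, a common naming style for MCP tools
    name = re.sub(r"(?<!^)(?=[A-Z])", "_", operation["operationId"]).lower()
    return {
        "name": name,
        "description": operation.get("summary", ""),
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }


operation = {
    "operationId": "searchCustomer",
    "summary": "Search for a customer by email",
    "parameters": [
        {
            "name": "email",
            "in": "query",
            "required": True,
            "schema": {"type": "string", "format": "email"},
            "description": "The customer's email address",
        }
    ],
}
tool = operation_to_mcp_tool(operation)
print(tool["name"])  # search_customer
```

The tool handler behind that definition can then call the same function the REST endpoint calls, so only the protocol framing is duplicated.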

The convergence trend

GPT Actions and MCP started from different design philosophies (HTTP-native vs. protocol-native), but the gap is narrowing.

OpenAI added MCP support to the Agents SDK in March 2025, allowing OpenAI-powered agents to connect to MCP servers alongside traditional function calling. This does not replace GPT Actions (which remain the way to add tool calling to Custom GPTs in ChatGPT), but it signals that OpenAI recognizes MCP as a viable standard for agent-to-tool communication.

On the MCP side, the protocol now supports Streamable HTTP transport, which makes remote MCP servers look more like traditional HTTP services. An MCP server behind a Streamable HTTP endpoint is not far architecturally from a REST API, though it still uses JSON-RPC internally rather than REST conventions.
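To illustrate how close this is to an ordinary HTTP call, here is a sketch of the POST that carries a single JSON-RPC message to a Streamable HTTP endpoint. It only builds a description of the request and sends nothing. The endpoint URL and token are placeholders, `build_mcp_post` is a hypothetical helper, and the initialization handshake and session handling that a real client performs are omitted.

```python
import json
from typing import Optional


def build_mcp_post(endpoint: str, message: dict, token: Optional[str] = None) -> dict:
    """Describe the HTTP POST that carries one JSON-RPC message to an MCP endpoint."""
    headers = {
        "Content-Type": "application/json",
        # The server may answer with plain JSON or open an SSE stream, so accept both.
        "Accept": "application/json, text/event-stream",
    }
    if token:
        headers["Authorization"] = f"Bearer {token}"
    return {"method": "POST", "url": endpoint, "headers": headers, "body": json.dumps(message)}


http_request = build_mcp_post(
    "https://mcp.example.com/mcp",  # hypothetical endpoint
    {"jsonrpc": "2.0", "id": 1, "method": "tools/list"},
    token="example-token",
)
print(http_request["method"], http_request["url"])
print(http_request["headers"]["Accept"])
```

Every operation goes to the same URL with the same verb; the JSON-RPC `method` field, not the HTTP path, selects what happens.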

The likely outcome is that both patterns coexist. OpenAPI schemas will remain the standard way to describe REST APIs, and GPT Actions (or similar mechanisms) will continue to be the simplest bridge between those APIs and AI models in OpenAI’s ecosystem. MCP will grow as the cross-vendor standard for tool integration, especially in agentic and multi-model architectures. Platforms like Quickchat AI that support both HTTP Request Actions and Remote MCP Actions give you the flexibility to use whichever approach fits your specific integration.