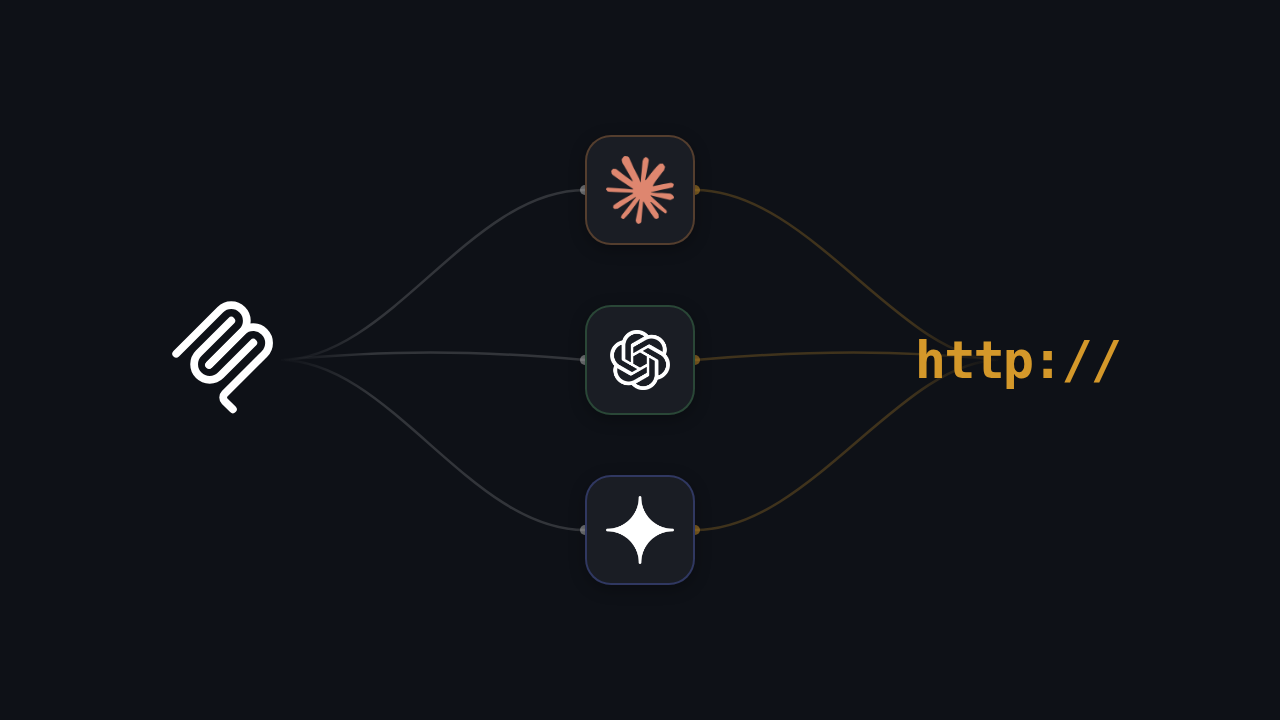

When connecting an AI agent to external tools and APIs, there are two broad approaches: use Model Context Protocol (MCP) as an abstraction layer, or call HTTP APIs directly. Both work. They make different trade-offs around flexibility, complexity, performance, and portability.

This post compares the two approaches with concrete code examples, latency analysis, and a decision framework for choosing between them.

The two approaches

Direct HTTP integration

The AI application (or its backend) calls external APIs directly using standard HTTP requests. The developer writes code to construct requests, handle authentication, parse responses, and feed results back to the LLM.

    ┌──────┐     ┌────────┐                        ┌─────────────┐
    │ User │────▶│ AI App │──── HTTP request ─────▶│ Weather API │
    └──────┘     │        │◀─── JSON response ─────│             │
                 │        │                        └─────────────┘
                 │        │── result as context ──▶ LLM
                 │        │◀── formatted answer ───
                 └───┬────┘
                     │
                     ▼
              User gets answer

MCP integration

The AI application connects to an MCP server that wraps the external API. The application uses MCP to discover available tools, call them by name, and receive results. The MCP server handles the actual HTTP calls to the external API.

    ┌──────┐     ┌───────────────────┐              ┌────────────┐     ┌──────────┐
    │ User │────▶│ AI App/MCP Client │── JSON-RPC ─▶│ MCP Server │────▶│ HTTP API │
    └──────┘     │                   │◀─ JSON-RPC ──│            │◀────│          │
                 │                   │              └────────────┘     └──────────┘
                 │                   │── result as context ──▶ LLM
                 │                   │◀── formatted answer ───
                 └─────────┬─────────┘
                           │
                           ▼
                   User gets answer

The key difference: with direct HTTP, the AI application owns the API integration code. With MCP, the MCP server owns it, and the AI application just calls tools by name.

Code comparison

Let’s compare both approaches for a practical example: fetching a GitHub user’s repositories.

Direct HTTP integration

    import httpx


    async def get_user_repos(username: str, token: str) -> list[dict]:
        """Fetch repositories for a GitHub user."""
        async with httpx.AsyncClient() as client:
            response = await client.get(
                f"https://api.github.com/users/{username}/repos",
                headers={
                    "Authorization": f"Bearer {token}",
                    "Accept": "application/vnd.github.v3+json",
                    "X-GitHub-Api-Version": "2022-11-28"
                },
                params={"sort": "updated", "per_page": 10}
            )
            response.raise_for_status()
            repos = response.json()

        return [
            {
                "name": r["name"],
                "description": r["description"],
                "stars": r["stargazers_count"],
                "language": r["language"],
                "url": r["html_url"]
            }
            for r in repos
        ]

To integrate this with an LLM, you also need:

- A tool definition in whatever format the LLM expects (OpenAI function calling schema, Claude tool use schema, etc.)

- Code to parse the LLM’s tool call, invoke get_user_repos, and inject the result back into the conversation

- Error handling for HTTP failures, rate limits, and auth expiration
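As a rough sketch of that glue code, assuming an OpenAI-style function-calling schema: the tool definition and dispatcher below are illustrative only. `fake_get_user_repos` is a hypothetical stand-in for the real HTTP function so the example runs offline, and the shape of `tool_call` (a name plus JSON-encoded arguments) is an assumption about what your LLM client hands back.

```python
import asyncio
import json

# Tool definition in the OpenAI function-calling style (one common format).
GET_USER_REPOS_TOOL = {
    "type": "function",
    "function": {
        "name": "get_user_repos",
        "description": "Fetch the most recently updated repositories for a GitHub user.",
        "parameters": {
            "type": "object",
            "properties": {
                "username": {"type": "string", "description": "GitHub username"}
            },
            "required": ["username"],
        },
    },
}


async def fake_get_user_repos(username: str) -> list[dict]:
    """Hypothetical stand-in for the real HTTP call, so the sketch runs offline."""
    return [{"name": f"{username}-demo", "stars": 1}]


# Map tool names to the coroutine functions that implement them.
TOOL_REGISTRY = {"get_user_repos": fake_get_user_repos}


async def dispatch_tool_call(tool_call: dict) -> str:
    """Run the tool the LLM asked for and return a JSON string for the conversation."""
    name = tool_call["name"]
    handler = TOOL_REGISTRY.get(name)
    if handler is None:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        # Assumed format: arguments arrive as a JSON-encoded string.
        arguments = json.loads(tool_call["arguments"])
        result = await handler(**arguments)
        return json.dumps({"result": result})
    except Exception as exc:  # report failures to the LLM instead of crashing
        return json.dumps({"error": str(exc)})


tool_call = {"name": "get_user_repos", "arguments": '{"username": "octocat"}'}
print(asyncio.run(dispatch_tool_call(tool_call)))
```

Each LLM provider expects a slightly different schema and tool-call shape, so this layer typically has to be adapted per provider.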

MCP server

    import os

    import httpx
    from mcp.server.fastmcp import FastMCP

    mcp = FastMCP("github-server")

    # The token lives in the server's environment; clients never see it.
    GITHUB_TOKEN = os.environ["GITHUB_TOKEN"]


    @mcp.tool()
    async def get_user_repos(username: str) -> str:
        """Fetch the 10 most recently updated repositories for a GitHub user.

        Args:
            username: GitHub username to look up
        """
        async with httpx.AsyncClient() as client:
            response = await client.get(
                f"https://api.github.com/users/{username}/repos",
                headers={
                    "Authorization": f"Bearer {GITHUB_TOKEN}",
                    "Accept": "application/vnd.github.v3+json",
                    "X-GitHub-Api-Version": "2022-11-28"
                },
                params={"sort": "updated", "per_page": 10}
            )
            response.raise_for_status()
            repos = response.json()

        # Format the result as plain text the LLM can read directly.
        lines = []
        for r in repos:
            stars = r["stargazers_count"]
            lang = r["language"] or "unknown"
            lines.append(f"- {r['name']} ({lang}, {stars} stars): {r['description'] or 'No description'}")
        return "\n".join(lines)


    if __name__ == "__main__":
        mcp.run(transport="stdio")

The MCP version encapsulates the API call, authentication, and response formatting inside the server. Any MCP-compatible client can use this tool without knowing anything about the GitHub API.
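On the wire, a client invokes this tool with a JSON-RPC `tools/call` request. The sketch below builds that request and reads a text result using only the standard library. The message shapes follow the MCP specification; the helper names `build_tool_call` and `extract_text` and the sample response are made up for illustration.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def extract_text(response_json: str) -> str:
    """Join the text content items of a tools/call result; raise on errors."""
    message = json.loads(response_json)
    if "error" in message:  # protocol-level JSON-RPC error
        raise RuntimeError(message["error"]["message"])
    result = message["result"]
    text = "\n".join(
        item["text"] for item in result["content"] if item["type"] == "text"
    )
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError(text)
    return text


# Made-up sample response, shaped like what a server might return.
sample_response = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "content": [{"type": "text", "text": "- hello-world (Python, 3 stars): Demo"}],
        "isError": False,
    },
})

request = build_tool_call(1, "get_user_repos", {"username": "octocat"})
print(request)
print(extract_text(sample_response))
```

In practice an MCP client library handles this framing, so application code rarely builds these messages by hand.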

What changes for the AI application

| Concern | Direct HTTP | MCP |
|---|---|---|
| Tool definition | Written in the LLM’s native format (OpenAI schema, etc.) | Automatically generated from MCP server |
| Authentication | Managed by the AI app | Managed by the MCP server |
| Response parsing | AI app extracts and formats data | MCP server returns pre-formatted result |
| Error handling | AI app handles HTTP errors | MCP server handles errors, returns structured error messages |
| Adding a new tool | Write new code in the AI app | Connect to a new MCP server (or add a tool to existing server) |
| Switching LLM | Rewrite tool definitions for new LLM format | No changes needed |

Performance comparison

MCP adds a layer between the AI application and the external API. That layer has a cost.

Latency

For stdio transport (local MCP servers), the overhead is minimal: process spawning at startup (one-time, typically under 100ms) and inter-process communication per message (sub-millisecond on modern systems). In practice, the external API call dominates total latency.

For remote MCP servers (Streamable HTTP or SSE), the added cost is one HTTP round-trip between the client and the MCP server. The server’s own round-trip to the external API replaces the call your application would otherwise have made directly, so it is not extra work in itself. If the MCP server and external API are co-located, the overhead is small (single-digit milliseconds). If they are in different regions, it compounds.

| Setup | Approximate added latency |
|---|---|
| Local MCP server (stdio) | < 1ms per tool call |
| Remote MCP server (same region as API) | 1-5ms per tool call |
| Remote MCP server (different region from API) | 10-50ms per tool call |

For most AI agent use cases, where the LLM inference takes 500ms-5s, the MCP overhead is negligible.
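To put those numbers in proportion, here is a small back-of-the-envelope calculation. The 5 ms overhead and 500 ms inference time are taken from the ranges above; the 200 ms external API time is an assumed value for illustration.

```python
def overhead_share(mcp_overhead_ms: float, llm_ms: float, api_ms: float) -> float:
    """Return MCP overhead as a fraction of total request time."""
    total = mcp_overhead_ms + llm_ms + api_ms
    return mcp_overhead_ms / total


# 5 ms of MCP overhead on top of 500 ms inference and an assumed 200 ms API call.
share = overhead_share(mcp_overhead_ms=5, llm_ms=500, api_ms=200)
print(f"MCP overhead: {share:.1%} of total latency")
```

Under these assumptions the MCP layer accounts for well under 1% of the time the user waits.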

Connection management

Direct HTTP: Your application manages connections to each external API. This means handling connection pools, retry logic, and rate limiting per API.

MCP: Your application manages one connection per MCP server. The server manages the external API connection. If you have 10 tools, each backed by a different API, spread across 3 MCP servers, you manage 3 connections instead of 10.
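As one example of the per-API plumbing that direct HTTP pushes into your application, here is a minimal retry helper with exponential backoff. It is a sketch, not a complete policy: the `call_with_retry` name, the choice to retry only on `ConnectionError`, and the default delays are all assumptions, and `flaky_api` is a hypothetical stand-in for a real request.

```python
import asyncio
from typing import Awaitable, Callable, TypeVar

T = TypeVar("T")


async def call_with_retry(
    operation: Callable[[], Awaitable[T]],
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> T:
    """Retry an async operation with exponential backoff between attempts."""
    for attempt in range(1, max_attempts + 1):
        try:
            return await operation()
        except ConnectionError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Delay doubles each time: base_delay, 2x, 4x, ...
            await asyncio.sleep(base_delay * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")


attempts = {"count": 0}


async def flaky_api() -> str:
    """Hypothetical API call that fails twice before succeeding."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"


print(asyncio.run(call_with_retry(flaky_api, base_delay=0.01)))
```

With MCP, logic like this lives once inside the server rather than in every application that uses the tool.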

When to use MCP

Multiple tool integrations

If your AI application needs to connect to 5+ external services, MCP reduces the integration burden. Instead of writing and maintaining custom API clients for each service, you connect to MCP servers (many of which are available as open-source projects).

Portability across AI platforms

If you build MCP servers for your tools, those servers work with any MCP client: Claude Desktop, Cursor, Windsurf, custom applications, and platforms like Quickchat AI. With direct HTTP, you would need to re-implement tool definitions for each platform’s format.

Dynamic tool discovery

MCP supports runtime tool discovery through tools/list. An AI application can connect to a server and learn what tools are available without any hardcoded configuration. This is useful for scenarios where tools change frequently or are user-configurable.
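To illustrate discovery, the sketch below takes a `tools/list` result and converts each entry into an OpenAI-style function definition. The `name`, `description`, and `inputSchema` fields come from the MCP specification; the sample payload and the `to_openai_tools` helper are hypothetical.

```python
def to_openai_tools(list_result: dict) -> list[dict]:
    """Convert an MCP tools/list result into OpenAI-style tool definitions."""
    return [
        {
            "type": "function",
            "function": {
                "name": tool["name"],
                "description": tool.get("description", ""),
                # MCP already describes inputs as JSON Schema, so it maps directly.
                "parameters": tool["inputSchema"],
            },
        }
        for tool in list_result["tools"]
    ]


# Made-up sample of what a server might return for tools/list.
sample_list_result = {
    "tools": [
        {
            "name": "get_user_repos",
            "description": "Fetch the 10 most recently updated repositories for a GitHub user.",
            "inputSchema": {
                "type": "object",
                "properties": {"username": {"type": "string"}},
                "required": ["username"],
            },
        }
    ]
}

openai_tools = to_openai_tools(sample_list_result)
print([tool["function"]["name"] for tool in openai_tools])
```

Because the conversion is mechanical, a client can do it once for every server it connects to instead of hand-writing a schema per tool.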

Separation of concerns

If different teams own different tools, MCP provides a clean boundary. The team that owns the Salesforce integration builds and maintains the Salesforce MCP server. The AI application team connects to it without needing to understand Salesforce’s API. Updates to the Salesforce API are handled in the MCP server without touching the AI application.

When to use direct HTTP

One or two integrations

If your AI agent only needs to call one or two APIs (say, a database lookup), the overhead of running an MCP server is unnecessary. A direct HTTP call is simpler and has fewer moving parts.

Maximum control over requests

MCP servers abstract away the HTTP details. If you need precise control over request construction (specific headers, query parameters, request signing, or non-standard authentication), direct HTTP gives you that control without fighting an abstraction layer.
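Request signing is a typical case. The sketch below signs a request body with HMAC-SHA256 using only the standard library. The header names `X-Timestamp` and `X-Signature` and the "timestamp.body" signing scheme are assumptions for illustration, since every API defines its own.

```python
import hashlib
import hmac


def sign_request(secret: bytes, timestamp: str, body: bytes) -> dict[str, str]:
    """Build signature headers for a request (hypothetical scheme)."""
    # Sign "<timestamp>.<body>" so the server can reject stale requests.
    payload = timestamp.encode() + b"." + body
    signature = hmac.new(secret, payload, hashlib.sha256).hexdigest()
    return {"X-Timestamp": timestamp, "X-Signature": signature}


def verify_request(secret: bytes, headers: dict[str, str], body: bytes) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign_request(secret, headers["X-Timestamp"], body)["X-Signature"]
    return hmac.compare_digest(expected, headers["X-Signature"])


body = b'{"q": "status"}'
headers = sign_request(b"demo-secret", "1700000000", body)
print(verify_request(b"demo-secret", headers, body))
```

With direct HTTP these headers are simply passed along with the request; behind an MCP server you depend on the server exposing or implementing the scheme.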

Latency-sensitive operations

If every millisecond matters (e.g., real-time voice agents where tool calls must complete within strict time budgets), removing the MCP layer eliminates one hop.
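Whichever path you choose, a strict budget is usually enforced with a timeout around the tool call. A minimal sketch using `asyncio.wait_for`; the `call_with_budget` helper, the 50 ms budget, and the fallback text are hypothetical, and `slow_tool` stands in for a real tool call.

```python
import asyncio


async def call_with_budget(tool_coro, budget_seconds: float, fallback: str) -> str:
    """Await a tool call, returning a fallback if it exceeds the time budget."""
    try:
        return await asyncio.wait_for(tool_coro, timeout=budget_seconds)
    except asyncio.TimeoutError:
        return fallback


async def slow_tool() -> str:
    """Hypothetical tool that takes longer than the budget allows."""
    await asyncio.sleep(0.2)
    return "tool result"


result = asyncio.run(call_with_budget(slow_tool(), 0.05, "still checking"))
print(result)
```

Measuring how often the fallback fires with and without the MCP hop is one way to decide whether the extra layer fits the budget.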

Non-AI HTTP calls

If the HTTP call is not triggered by an AI agent (e.g., a backend cron job that syncs data), MCP is irrelevant. MCP is specifically designed for AI-to-tool communication. Regular backend services should use regular HTTP clients.

Hybrid approach

Many production systems use both. The pattern:

- MCP for tool integrations that the AI agent calls during conversations (CRM lookups, ticket creation, knowledge base queries)

- Direct HTTP for internal backend operations, webhooks, and system-to-system communication

This is how Quickchat AI works internally. The platform supports both HTTP Request Actions (direct API calls with parameter templating) and Remote MCP Actions (connections to external MCP servers). You choose the right approach for each integration:

| Integration type | Recommended approach |
|---|---|
| Simple API call with known parameters | HTTP Request Action |
| Complex integration with multiple related tools | Remote MCP Action |
| Third-party service with an existing MCP server | Remote MCP Action |
| Internal API with custom authentication | HTTP Request Action |
| User-configurable tool | Remote MCP Action |

For a detailed comparison of how this works in practice, including the differences between OpenAI’s GPT Actions and MCP, see GPT Actions vs MCP.

Decision framework

Work through these questions in order:

- Is the AI agent calling the tool? If no (it’s a backend operation), use direct HTTP.

- How many external tools do you need? If 1-2, direct HTTP is simpler. If 3+, consider MCP.

- Does a pre-built MCP server exist for the service? If yes, using it saves development time.

- Do you need the integration to work across multiple AI platforms? If yes, MCP provides portability.

- Is latency critical (sub-100ms budget for tool calls)? If yes, benchmark MCP overhead and decide accordingly.

- Do different teams own different integrations? If yes, MCP’s server-per-integration model helps with ownership boundaries.
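The questions above can be sketched as a small function. This is one possible reading of the framework, not a definitive rule: the `Integration` fields, the way the signals are combined, and the returned labels are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Integration:
    called_by_agent: bool
    tool_count: int
    prebuilt_server_exists: bool = False
    needs_multi_platform: bool = False
    separate_team_ownership: bool = False
    latency_critical: bool = False


def recommend(integration: Integration) -> str:
    """Return a coarse recommendation following the questions above."""
    # Backend operations that no agent triggers stay on plain HTTP.
    if not integration.called_by_agent:
        return "direct HTTP"
    # Any one of these signals tips the balance toward MCP.
    mcp_signals = (
        integration.tool_count >= 3
        or integration.prebuilt_server_exists
        or integration.needs_multi_platform
        or integration.separate_team_ownership
    )
    if not mcp_signals:
        return "direct HTTP"
    if integration.latency_critical:
        return "MCP (benchmark overhead first)"
    return "MCP"


print(recommend(Integration(called_by_agent=True, tool_count=5)))
print(recommend(Integration(called_by_agent=True, tool_count=1)))
```

Treat the output as a starting point for discussion; real decisions will weigh these factors rather than apply them as hard switches.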

In practice, if you are building an AI agent that needs to interact with external services, MCP is increasingly the default choice. The ecosystem of pre-built servers is large enough that you can often get started without writing any server code at all.

Further reading

- The Complete Guide to Model Context Protocol (MCP): Comprehensive MCP reference

- How Model Context Protocol works. MCP Explained: MCP introduction

- GPT Actions vs MCP: Comparison with OpenAI’s approach

- MCP Specification: The official protocol spec

- HubSpot AI Actions: Example of HTTP-based AI actions in practice