Deploying a chatbot is the easy part. Knowing whether it is actually working requires measurement. Most chatbot deployments fail not because the technology is bad, but because nobody defined what “working” means or tracked the right metrics to find out.

This guide covers the KPIs that matter for chatbot performance, how to calculate them, what benchmarks to aim for, and how to use the data to improve your bot over time.

The core KPIs

There are dozens of metrics you could track. The ones below are the most actionable. They split into two categories: operational metrics (is the bot doing its job?) and quality metrics (is the bot doing its job well?).

Operational metrics

| KPI | What it measures | Formula | Good benchmark |
|---|---|---|---|
| Containment rate | % of conversations fully handled by the bot without human handoff | (conversations resolved by the bot alone / total conversations) * 100 | 60-80% |
| Deflection rate | % of potential support tickets prevented by the bot | (conversations resolved by bot / (bot resolutions + tickets created)) * 100 | 40-60% |
| Handoff rate | % of conversations escalated to a human agent | (escalated conversations / total conversations) * 100 | 20-40% |
| Average resolution time | Time from first user message to issue resolution | Mean or median of (resolution timestamp - first message timestamp) | Under 2 minutes for simple queries |
| First response time | Time from user’s first message to bot’s first reply | Mean of (first bot reply timestamp - first user message timestamp) | Under 5 seconds |
| Conversations per day/week | Volume of bot interactions over time | Count of conversations per time period | Depends on deployment |
| Fallback rate | % of bot responses where the bot did not understand the user and gave a generic reply | (fallback responses / total bot responses) * 100 | Under 15% |
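The handoff, fallback, and timing formulas in this table can be computed directly from conversation logs. The sketch below assumes a hypothetical `Conversation` record with escalation, timestamp, and fallback-count fields; your platform's export will use different names, so treat the field mapping as an assumption.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import fmean, median
from typing import Optional


@dataclass
class Conversation:
    # Hypothetical record shape; map these fields to your platform's export.
    escalated: bool                 # handed off to a human agent
    first_user_ts: float            # first user message, seconds since epoch
    first_bot_ts: Optional[float]   # first bot reply, None if the bot never replied
    resolved_ts: Optional[float]    # resolution time, None if never resolved
    bot_responses: int              # total bot replies in the conversation
    fallback_responses: int         # generic "I didn't understand" replies


def pct(part: float, whole: float) -> float:
    """Percentage helper that returns 0.0 when the denominator is zero."""
    return 100.0 * part / whole if whole else 0.0


def operational_kpis(convs: list[Conversation]) -> dict[str, float]:
    first_response = [c.first_bot_ts - c.first_user_ts
                      for c in convs if c.first_bot_ts is not None]
    resolution = [c.resolved_ts - c.first_user_ts
                  for c in convs if c.resolved_ts is not None]
    return {
        # (escalated conversations / total conversations) * 100
        "handoff_rate": pct(sum(c.escalated for c in convs), len(convs)),
        # (fallback responses / total bot responses) * 100
        "fallback_rate": pct(sum(c.fallback_responses for c in convs),
                             sum(c.bot_responses for c in convs)),
        "mean_first_response_s": fmean(first_response) if first_response else 0.0,
        "median_resolution_s": median(resolution) if resolution else 0.0,
    }
```

Resolution time uses the median here because a handful of very long conversations can pull a mean far above what a typical user experiences; swap in `fmean` if you prefer the mean.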

Quality metrics

| KPI | What it measures | Formula | Good benchmark |
|---|---|---|---|
| CSAT (Customer Satisfaction) | User satisfaction with the bot interaction | (positive ratings / total ratings) * 100 | Above 80% |
| Goal completion rate | % of conversations where the user achieved what they came for | (conversations with goal completed / total conversations) * 100 | Above 70% |
| Conversation rating | Average rating users give to their bot interaction | Mean of all user ratings (1-5 scale) | Above 4.0 |
| Sentiment score | Overall sentiment of user messages during the conversation | Positive/negative/neutral classification or continuous score | Majority positive or neutral |
| Topic accuracy | Whether the bot correctly identified the user’s intent | Manual review or automated classification check | Above 90% |
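The quality formulas are just as simple once you have the raw signals. This minimal sketch assumes thumbs ratings arrive as booleans, star ratings as integers, and goal completion as one boolean per conversation; the function names are hypothetical.

```python
from __future__ import annotations

from statistics import fmean


def csat_percent(thumbs: list[bool]) -> float:
    """(positive ratings / total ratings) * 100, where True means thumbs up.
    Only rated conversations are in the list; unrated ones are left out."""
    return 100.0 * sum(thumbs) / len(thumbs) if thumbs else 0.0


def average_rating(stars: list[int]) -> float:
    """Mean of 1-5 star ratings; rejects values outside the scale."""
    if any(s < 1 or s > 5 for s in stars):
        raise ValueError("ratings must be on a 1-5 scale")
    return fmean(stars) if stars else 0.0


def goal_completion_rate(goal_completed: list[bool]) -> float:
    """(conversations with goal completed / total conversations) * 100.
    Every conversation counts here, rated or not."""
    return 100.0 * sum(goal_completed) / len(goal_completed) if goal_completed else 0.0
```

Note the different denominators: CSAT divides by rated conversations only, while goal completion divides by all conversations, so the two percentages are not directly comparable.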

Containment rate: the most important metric

Containment rate measures whether the bot can handle a conversation from start to finish without a human stepping in. It is the single most important operational metric because it directly correlates with cost savings.

If your bot has a containment rate of 70%, that means 7 out of 10 conversations are fully automated. The remaining 3 are handed off to human agents. Every percentage point improvement in containment rate translates directly to fewer agent hours needed.

How to calculate it

Containment rate = (conversations resolved by bot alone / total conversations) * 100

A “resolved” conversation means the user got their answer or completed their task. A conversation that ends with the user leaving in frustration is not resolved, even if no human took over. This distinction matters. Some bots report high containment rates simply because users gave up, which is not the same thing as resolution.
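To see how much abandoned conversations can inflate the number, compare a naive count of non-escalated conversations with one that also requires resolution. The `Conversation` fields below are hypothetical, and how you decide `resolved` (explicit confirmation, positive rating, completed action) is an assumption you need to define for your own bot.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Conversation:
    escalated: bool  # a human agent took over (hypothetical field)
    resolved: bool   # user got an answer or completed the task (hypothetical field)


def naive_containment(convs: list[Conversation]) -> float:
    """Counts every non-escalated conversation, including users who gave up."""
    if not convs:
        return 0.0
    return 100.0 * sum(not c.escalated for c in convs) / len(convs)


def containment_rate(convs: list[Conversation]) -> float:
    """Counts only conversations the bot resolved with no human involvement."""
    if not convs:
        return 0.0
    return 100.0 * sum(c.resolved and not c.escalated for c in convs) / len(convs)


sample = ([Conversation(escalated=False, resolved=True)] * 6
          + [Conversation(escalated=False, resolved=False)] * 2   # users who gave up
          + [Conversation(escalated=True, resolved=True)] * 2)
```

In this toy sample, two users who gave up without escalating push the naive figure from 60% to 80%.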

What affects containment rate

- Knowledge base coverage: If the bot does not have information about a topic, it cannot answer questions about it. Gaps in the knowledge base are the most common cause of low containment.

- Intent recognition accuracy: If the bot misunderstands what the user is asking, it either gives the wrong answer (user unsatisfied) or escalates unnecessarily (lower containment).

- Conversation design: How the bot handles ambiguity, follow-up questions, and multi-turn conversations affects whether it can fully resolve an inquiry.

- Action capabilities: If the bot can look up order status, create tickets, or perform other actions, it can resolve more types of requests without human help.

Deflection rate vs. containment rate

These two metrics are related but measure different things.

Containment rate measures how many conversations the bot resolves on its own out of all conversations it handles.

Deflection rate measures how many potential support tickets the bot prevents from being created. This includes both:

- Conversations the bot resolves (the user would have created a ticket otherwise)

- Conversations where the bot provides enough information that the user does not need to follow up

Deflection rate is harder to measure accurately because it requires estimating what would have happened without the bot. A common approach is to compare ticket volume before and after bot deployment, controlling for traffic changes.
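A minimal sketch of both approaches follows: the table's deflection formula, and a before/after comparison that normalizes ticket counts by traffic. Using site sessions as the traffic measure is an assumption; any volume measure that tracks support demand (active users, orders) could stand in, and the function names are hypothetical.

```python
def deflection_rate(bot_resolutions: int, tickets_created: int) -> float:
    """(bot resolutions / (bot resolutions + tickets created)) * 100."""
    denom = bot_resolutions + tickets_created
    return 100.0 * bot_resolutions / denom if denom else 0.0


def traffic_adjusted_ticket_reduction(tickets_before: int, traffic_before: int,
                                      tickets_after: int, traffic_after: int) -> float:
    """Percent drop in tickets per unit of traffic (e.g. per site session)
    between a pre-launch and a post-launch period. Inputs must be positive."""
    rate_before = tickets_before / traffic_before
    rate_after = tickets_after / traffic_after
    return 100.0 * (rate_before - rate_after) / rate_before


print(deflection_rate(450, 550))                                   # 45.0
print(traffic_adjusted_ticket_reduction(1000, 100_000, 900, 120_000))
```

In the second call, raw tickets fell only 10%, but traffic grew 20%, so tickets per session fell 25%. Comparing raw counts alone would understate the bot's effect. The comparison still ignores seasonality and any other changes made in the same period.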

CSAT: measuring quality, not just throughput

A bot that resolves 90% of conversations but leaves users frustrated is not a success. Customer Satisfaction (CSAT) score captures the qualitative side.

Collection methods

| Method | Pros | Cons |
|---|---|---|
| Post-conversation survey (thumbs up/down or 1-5 stars) | Simple, high completion rate | Coarse data (binary or 5-point), no context |
| In-conversation rating prompt | Can ask at specific moments | May interrupt the flow |
| Follow-up email survey | More detailed feedback possible | Low response rate (5-15%) |
| Sentiment analysis of messages | No user action required, covers all conversations | Less accurate than explicit feedback |

The most common approach is a thumbs up/down prompt at the end of the conversation. This gives you a binary satisfaction signal with minimal friction. Quickchat AI includes conversation rating as a built-in feature, so you can track this without building custom survey logic.

CSAT benchmarks

Industry benchmarks for chatbot CSAT vary by use case:

| Use case | CSAT benchmark |
|---|---|
| Simple FAQ / informational | 85-95% |
| Order status / account lookup | 75-85% |
| Technical troubleshooting | 60-75% |
| Sales / lead qualification | 70-80% |
| General customer support | 75-85% |

If your CSAT is below these ranges, the issue is usually one of: incorrect answers (knowledge base problem), unhelpful responses (prompt engineering problem), or lack of handoff when needed (escalation logic problem).

Tracking topic distribution

Knowing what users are asking about is as important as knowing how well the bot answers. Topic classification groups conversations by subject matter, which helps you:

- Identify knowledge gaps (frequent topic with low resolution rate = missing content)

- Prioritize content creation (most common topics should have the best coverage)

- Detect emerging issues (sudden spike in a topic = potential product problem)

- Allocate human agent resources (topics the bot handles poorly need more human coverage)
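Spike detection, the third use above, can start as a simple comparison of this week's topic counts with a trailing average. The sketch assumes you can export one topic label per conversation; the function name, the 2x factor, and the 10-conversation floor are illustrative assumptions, not recommendations.

```python
from __future__ import annotations

from collections import Counter


def topic_spikes(weekly_topics: list[list[str]], factor: float = 2.0,
                 min_count: int = 10) -> list[str]:
    """Flag topics whose count in the latest week is at least `factor` times
    their average weekly count over the preceding weeks.

    weekly_topics: one list of topic labels per week, oldest first.
    min_count: ignore topics too small to matter, however fast they grew.
    """
    if len(weekly_topics) < 2:
        return []
    *history, current = [Counter(week) for week in weekly_topics]
    flagged = []
    for topic, count in current.items():
        # Counter returns 0 for unseen topics, so brand-new topics get a zero baseline.
        baseline = sum(week[topic] for week in history) / len(history)
        if count >= min_count and count >= factor * baseline:
            flagged.append(topic)
    return sorted(flagged)


weeks = [
    ["pricing"] * 30 + ["login"] * 5,
    ["pricing"] * 32 + ["login"] * 7,
    ["pricing"] * 31 + ["login"] * 20 + ["refunds"] * 12,
]
print(topic_spikes(weeks))  # ['login', 'refunds']
```

A flagged topic is a prompt to read transcripts, not proof of a product problem; a marketing email or a holiday can produce the same pattern.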

Most AI chatbot platforms classify topics automatically using the conversation content. Quickchat AI uses AI-based topic classification that categorizes conversations without requiring manual tagging rules.

Example topic report

| Topic | Conversations | Containment rate | Avg CSAT (1-5 scale) |
|---|---|---|---|
| Pricing questions | 342 | 82% | 4.2 |
| Account access issues | 218 | 45% | 3.1 |
| Product feature questions | 189 | 78% | 4.0 |
| Billing disputes | 87 | 22% | 2.8 |
| Integration setup | 64 | 61% | 3.7 |

This table immediately shows that “account access issues” and “billing disputes” need attention. Low containment and low CSAT in those areas suggest either missing bot capabilities or content gaps.
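A report like this can be generated from per-conversation rows and filtered automatically. This sketch assumes each row carries a topic label, a contained flag, and an optional 1-5 rating; the function name and the 50% containment and 3.5 rating cutoffs for `needs_attention` are hypothetical and should be tuned to your own baselines.

```python
from __future__ import annotations

from collections import defaultdict
from statistics import fmean
from typing import Optional


def topic_report(rows: list[tuple[str, bool, Optional[int]]],
                 min_containment: float = 50.0,
                 min_rating: float = 3.5) -> list[dict]:
    """rows: (topic, contained_by_bot, rating_1_to_5_or_None).
    Returns one dict per topic, highest volume first."""
    by_topic: dict[str, list[tuple[bool, Optional[int]]]] = defaultdict(list)
    for topic, contained, rating in rows:
        by_topic[topic].append((contained, rating))

    report = []
    for topic, items in by_topic.items():
        ratings = [r for _, r in items if r is not None]
        containment = 100.0 * sum(c for c, _ in items) / len(items)
        avg_rating = fmean(ratings) if ratings else None
        report.append({
            "topic": topic,
            "conversations": len(items),
            "containment": containment,
            "avg_rating": avg_rating,
            # Flag if either signal is weak; unrated topics are judged on containment only.
            "needs_attention": containment < min_containment
            or (avg_rating is not None and avg_rating < min_rating),
        })
    return sorted(report, key=lambda r: r["conversations"], reverse=True)
```

The flag uses OR rather than AND, so a topic with decent containment but poor ratings (or the reverse) still surfaces for review.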

Sentiment analysis

Sentiment analysis classifies the emotional tone of user messages as positive, negative, or neutral. This is distinct from CSAT because it measures sentiment throughout the conversation, not just the outcome.

A conversation might end with a positive CSAT rating, but sentiment analysis could reveal that the user was frustrated for the first three exchanges before the bot finally understood their question. That mid-conversation friction is valuable information for improving the bot.

Sentiment tracking is most useful when aggregated over time. A rising trend in negative sentiment across all conversations might indicate a product issue (users coming in already frustrated) rather than a bot issue.
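Aggregating over time can be as simple as the share of negative messages per ISO week. This sketch assumes your sentiment classifier has already labeled each user message as "positive", "negative", or "neutral"; the function names are hypothetical, and the three-week "strictly rising" rule in `is_rising` is an illustrative heuristic rather than a statistical test.

```python
from __future__ import annotations

from collections import defaultdict
from datetime import date


def weekly_negative_share(messages: list[tuple[date, str]]) -> dict[tuple[int, int], float]:
    """messages: (message date, sentiment label). Returns % negative per
    (ISO year, ISO week), in chronological order."""
    counts = defaultdict(lambda: [0, 0])  # (iso_year, iso_week) -> [negative, total]
    for day, label in messages:
        iso = day.isocalendar()
        key = (iso[0], iso[1])
        counts[key][1] += 1
        if label == "negative":
            counts[key][0] += 1
    return {week: 100.0 * neg / total for week, (neg, total) in sorted(counts.items())}


def is_rising(series: list[float], weeks: int = 3) -> bool:
    """True if the last `weeks` values are strictly increasing."""
    tail = series[-weeks:]
    return len(tail) == weeks and all(a < b for a, b in zip(tail, tail[1:]))
```

Because the share is computed across all conversations, a rising trend here says nothing about where the frustration comes from; pair it with the topic breakdown to separate product issues from bot issues.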

Outcome tracking

Outcome tracking goes beyond containment to measure whether the conversation achieved a specific business goal. Common outcomes to track:

| Outcome | How to measure | Example |
|---|---|---|
| Lead captured | Bot collected contact information or qualified a lead | User provided email and company size |
| Ticket created | Bot created a support ticket via an action/integration | HubSpot ticket created with issue details |
| Sale assisted | Bot helped the user toward a purchase decision | User clicked through to pricing or checkout |
| Issue resolved | User’s problem was solved without escalation | User confirmed resolution or positive CSAT |
| Handoff completed | Bot successfully transferred to a human agent | Agent picked up the conversation |

In Quickchat AI, outcome tracking is available on the Professional plan and above. The AI classifies conversation outcomes automatically, and you can define custom outcome categories that match your business logic.

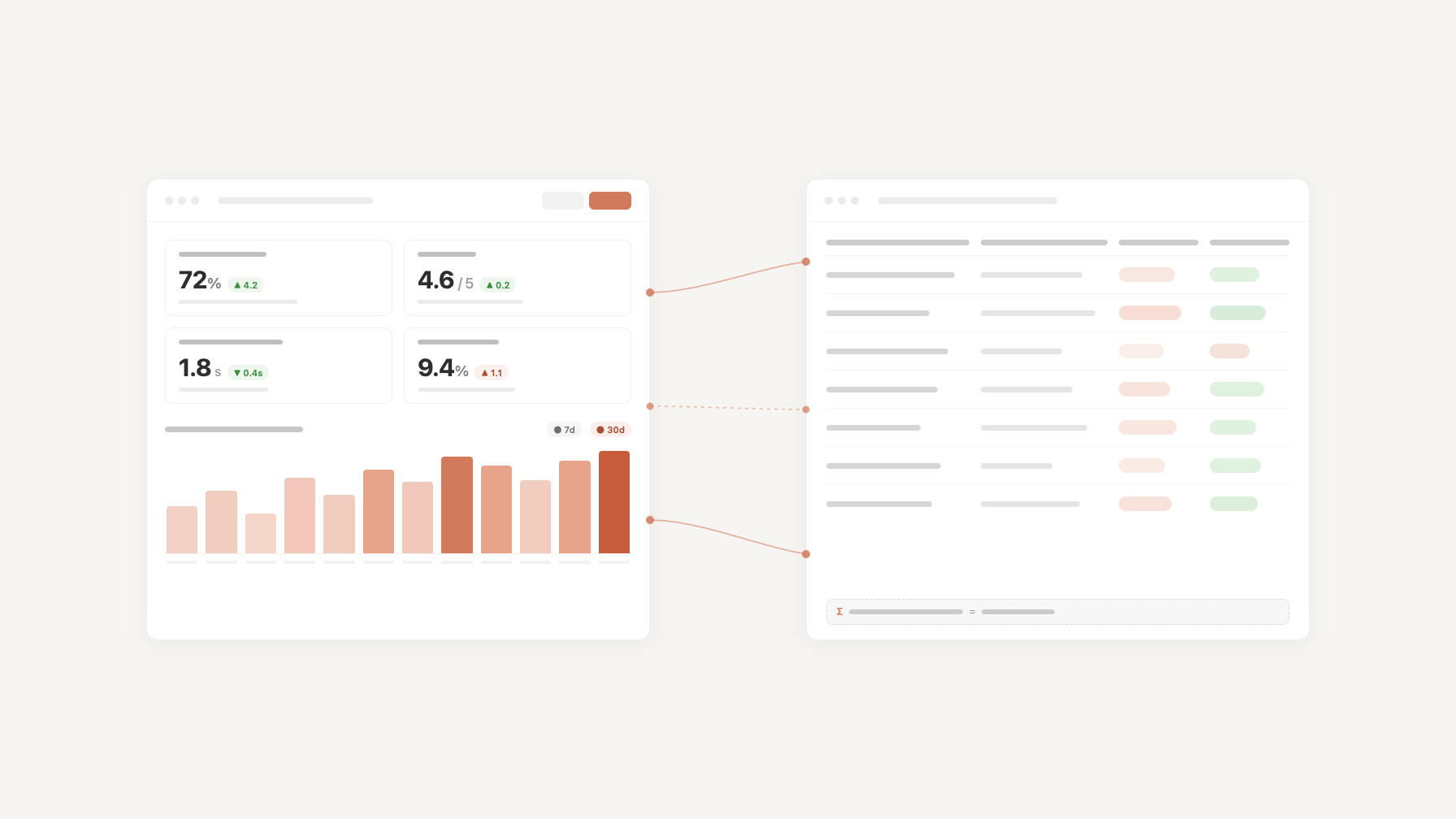

Setting up a KPI dashboard

A KPI dashboard should answer three questions at a glance:

- Is the bot working? (containment rate, fallback rate, first response time)

- Are users satisfied? (CSAT, sentiment trend)

- What needs improvement? (topic breakdown with per-topic containment and CSAT)

Minimum viable dashboard

If you are just starting, track these five metrics:

- Containment rate (daily)

- Total conversations (daily)

- CSAT score (weekly average)

- Top 5 topics by volume (weekly)

- Fallback rate (daily)

These five give you enough signal to identify problems and prioritize improvements.
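These five metrics can be rolled up from a per-conversation export with a few lines of grouping. The `Conv` record and both function names are hypothetical, and `weekly_summary` assumes you pass it one week's conversations; a production dashboard would more likely run the same aggregation in SQL or a BI tool.

```python
from __future__ import annotations

from collections import Counter, defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Conv:
    day: date
    topic: str
    contained: bool              # resolved by the bot alone
    bot_responses: int
    fallback_responses: int
    thumbs_up: Optional[bool]    # None if the user did not rate


def daily_metrics(convs: list[Conv]) -> dict[date, dict[str, float]]:
    """Daily volume, containment rate, and fallback rate."""
    groups: dict[date, list[Conv]] = defaultdict(list)
    for c in convs:
        groups[c.day].append(c)
    out = {}
    for day in sorted(groups):
        g = groups[day]
        responses = sum(c.bot_responses for c in g)
        out[day] = {
            "conversations": len(g),
            "containment_rate": 100.0 * sum(c.contained for c in g) / len(g),
            "fallback_rate": (100.0 * sum(c.fallback_responses for c in g) / responses
                              if responses else 0.0),
        }
    return out


def weekly_summary(convs: list[Conv]) -> dict[str, object]:
    """Weekly CSAT (rated conversations only) and top 5 topics by volume."""
    ratings = [c.thumbs_up for c in convs if c.thumbs_up is not None]
    return {
        "csat": 100.0 * sum(ratings) / len(ratings) if ratings else None,
        "top_topics": Counter(c.topic for c in convs).most_common(5),
    }
```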

Data sources

| Data point | Source |
|---|---|
| Conversation logs | Your chatbot platform (Quickchat AI dashboard, Intercom, etc.) |
| CSAT ratings | Built-in rating feature or post-chat survey tool |
| Topic classification | Platform’s built-in classifier or a separate NLP pipeline |
| Ticket volume | Your helpdesk (HubSpot, Zendesk, Freshdesk) |
| Agent handle time | Helpdesk or workforce management tool |

Using KPIs to improve your bot

Collecting metrics is pointless if you do not act on them. Here is a systematic approach:

Weekly review cycle

- Check containment rate trend: Is it going up, down, or flat? If dropping, check the fallback rate and topic distribution to find the cause.

- Review low-CSAT conversations: Read actual conversation transcripts where users rated the experience poorly. Look for patterns: wrong answers, awkward phrasing, premature escalation, or missing escalation.

- Identify high-volume/low-containment topics: These are your highest-impact improvement targets. Add knowledge base articles, improve existing ones, or add AI Actions to handle them.

- Check sentiment trends: A shift toward negative sentiment without a corresponding drop in CSAT might indicate building frustration that has not yet shown up in ratings.

- Compare week over week: Track whether changes you made last week had the expected effect.
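The week-over-week comparison in the last step is easy to automate once you decide which direction counts as better for each KPI. The direction map, the function name, and the 1-point tolerance below are assumptions for illustration; the values are percentages, so the tolerance is in percentage points.

```python
from __future__ import annotations

# Assumed direction of "better" for each KPI; adjust to match your dashboard.
HIGHER_IS_BETTER = {
    "containment_rate": True,
    "csat": True,
    "fallback_rate": False,
    "handoff_rate": False,
}


def week_over_week(last: dict[str, float], this: dict[str, float],
                   tolerance: float = 1.0) -> dict[str, str]:
    """Label each KPI present in both weeks as 'improved', 'worse', or 'flat'
    (absolute change within `tolerance` points)."""
    verdicts = {}
    for kpi, better_up in HIGHER_IS_BETTER.items():
        if kpi not in last or kpi not in this:
            continue
        delta = this[kpi] - last[kpi]
        if abs(delta) <= tolerance:
            verdicts[kpi] = "flat"
        elif (delta > 0) == better_up:
            verdicts[kpi] = "improved"
        else:
            verdicts[kpi] = "worse"
    return verdicts
```

A "worse" verdict on the fallback rate the week after a knowledge base change is exactly the kind of signal the review cycle is meant to catch.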

Common improvement actions

| Problem | KPI signal | Fix |
|---|---|---|
| Bot does not know the answer | High fallback rate for a topic | Add knowledge base content |
| Bot gives wrong answers | Low CSAT for a topic with high containment | Review and correct knowledge base articles |
| Bot escalates too quickly | Very low containment, high handoff rate | Adjust escalation thresholds, improve prompt |
| Bot does not escalate when it should | Low CSAT, users complaining about bot loops | Add escalation triggers for specific intents |
| Users asking about things the bot cannot do | High fallback rate for action-related requests | Add AI Actions (e.g., order lookup, ticket creation) |
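The rows of this table can be turned into first-pass rules that run against per-topic stats. Every threshold below is hypothetical except the 15% fallback cutoff, which mirrors the benchmark in the operational metrics table; the `TopicStats` record is hypothetical too, and its `action_request` and `loop_complaints` flags assume you tag topics and transcripts yourself. Treat the output as a list of candidates for the weekly review, not an automated diagnosis.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class TopicStats:
    topic: str
    fallback_rate: float     # % of bot responses that were fallbacks
    containment_rate: float  # % of conversations resolved by the bot alone
    handoff_rate: float      # % of conversations escalated
    csat: float              # % positive ratings
    action_request: bool     # users mostly ask the bot to do something (lookup, ticket)
    loop_complaints: bool    # transcripts show users stuck in bot loops


def suggest_fixes(s: TopicStats) -> list[str]:
    """Map KPI signals to the fixes in the table; thresholds are illustrative."""
    fixes = []
    if s.fallback_rate > 15:
        fixes.append("Add AI Actions (e.g., order lookup, ticket creation)"
                     if s.action_request else "Add knowledge base content")
    if s.csat < 70 and s.containment_rate >= 60:
        fixes.append("Review and correct knowledge base articles")
    if s.containment_rate < 40 and s.handoff_rate > 50:
        fixes.append("Adjust escalation thresholds, improve prompt")
    if s.csat < 70 and s.loop_complaints:
        fixes.append("Add escalation triggers for specific intents")
    return fixes
```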

Cost per conversation

The ultimate operational KPI ties everything back to money. Cost per conversation measures how much each bot interaction costs you.

Cost per bot conversation = (monthly platform cost + API costs) / total conversations

For a Quickchat AI Essential plan at $99/month with 15,000 messages included, if your bot averages 6 messages per conversation (3 user, 3 bot), that is roughly 2,500 conversations per month. Cost per conversation: about $0.04.

Compare this to the cost of a human agent handling the same conversation. If an agent handles 4 conversations per hour at $20/hour fully loaded cost, that is $5 per conversation. The bot is over 100x cheaper per interaction, even before accounting for the agent’s time being freed up for complex cases.
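The arithmetic above is easy to rerun with your own numbers. This sketch reproduces the Essential plan example and the agent comparison; the function names are hypothetical, and API costs are set to zero because the example does not include any, which is an assumption you should replace with your actual usage.

```python
def cost_per_bot_conversation(monthly_platform_cost: float, api_costs: float,
                              total_conversations: int) -> float:
    """(monthly platform cost + API costs) / total conversations."""
    return (monthly_platform_cost + api_costs) / total_conversations


def cost_per_human_conversation(hourly_cost: float, conversations_per_hour: float) -> float:
    """Fully loaded hourly cost divided by conversations handled per hour."""
    return hourly_cost / conversations_per_hour


# Worked example from the text: 15,000 messages at 6 messages per conversation.
conversations = 15_000 // 6                                  # 2,500 conversations
bot = cost_per_bot_conversation(99.0, 0.0, conversations)    # API costs assumed zero
human = cost_per_human_conversation(20.0, 4)
print(f"bot ${bot:.2f}, human ${human:.2f}, ratio {human / bot:.0f}x")
# prints: bot $0.04, human $5.00, ratio 126x
```

The resulting ratio of roughly 126x is consistent with the "over 100x" figure. If your plan bills per message rather than a flat fee, replace the platform cost with the message count times the per-message price before dividing.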

The calculation gets more nuanced with usage-based pricing or if you are running your own models, but the order-of-magnitude difference between bot and human cost per conversation holds in nearly all scenarios. For more on chatbot costs, see our detailed cost guide.

Further reading

- How Much Does a Chatbot Really Cost?: Full pricing breakdown

- 24/7 Customer Support AI Playbook: CSAT improvement strategies

- Customer Support Scalability: Scaling support operations with AI